In a discussion on an online forum, a freelance translator in Malaysia believes that he has fewer job opportunities now due to clients turning to AI.

Another individual in the same discussion claims that he was laid off due to his stance against using AI tools in the company.

In a separate conversation, a designer questioned whether he should resign as a sign of protest against his company’s increasing reliance on AI to generate content. Meanwhile, another user who handles accounting tasks at work says AI is taking over his role after the company adopted a new AI-powered processing system. He claims that the system has led to some colleagues being laid off and those who remain will be required to verify the work performed by AI.

In the comments section, other users have advised him to look for a new job elsewhere as he risks being replaced completely, or start showing more productivity in other aspects of his current work that cannot be done by AI.

These online conversations reflect a growing concern among Malaysian workers as AI tools become part of everyday work.

Staying ahead

According to an Ipsos AI Monitor 2025 survey involving 500 Malaysian adults, 63% fear AI’s potential to replace their current job within the next three to five years.

“The fear of being replaced by AI is very real, and it’s completely valid,” says Edvance CEO Razin Rozman.

Fahad encourages employees to experiment with various generative AI tools to discover how they can boost productivity at work. — Randstad Malaysia


Randstad Malaysia country director Fahad Naeem says findings from his company’s Malaysia Employer Brand research, which surveyed 2,588 respondents, show that 5% now expect to lose their jobs due to AI.

“Despite this, the overall sentiment towards AI remains largely positive, as 48% of Malaysian workers said that AI has improved their job satisfaction this year,” adds Fahad.

According to NTT Data CEO Henrick Choo, the best way to navigate the fear of being replaced by AI is to embrace lifelong learning and adaptability. He says that he has seen employees transition from traditional support roles to newly-created positions in AI operations, product testing and customer success – often within just a few months.

“Focus on roles that rely on uniquely human skills like empathy, decision-making, critical thinking, and creativity which are areas where AI still lags behind,” says Choo in a statement to LifestyleTech.

His advice to individuals is to start investing in digital fluency by learning to work alongside AI tools. He adds that they should embrace continuous learning and stay updated on the latest tools, trends and governance practices.

“AI is not here to replace people, but to augment their capabilities. The most successful professionals will be those who understand how to leverage AI tools while asking the right questions about data ownership, ethical use, and value distribution,” adds Choo.

Razin says individuals who have successfully adapted to the rise of AI often share key qualities such as adaptability, curiosity, and a mindset geared toward continuous learning. He also believes that basic AI literacy is becoming essential in the workplace regardless of whether an employee is in a technical role.

Razin says those who have successfully adapted to the rise of AI usually have a mindset geared toward continuous learning. — Edvance


“We’ve seen many success stories, people who were once in roles like administrative support or basic data entry, who, through upskilling, moved into project management, digital marketing, or even junior AI operations roles.

“What helped them stand out was the learning itself and the mindset shift. They saw AI not as the end of their role, but the beginning of a new one,” says Razin.

As for Fahad, he encourages employees to experiment with various generative AI tools to discover how they can boost productivity at work. He says exposure and experience can help employees gain a deeper understanding of AI’s potential and limitations, and anticipate how their roles might change.

“With the increasing integration of AI, talent should discuss with their managers how their career pathway may change. This involves identifying areas for deepening specialisation, mapping out training opportunities and having a pulse on how job responsibilities may evolve with increasing digital and AI disruption,” adds Fahad.

Fahad says the company’s 2025 Workmonitor report involving 503 respondents in Malaysia shows that 53% of talent trust their employers to invest in and provide opportunities for continuous learning, particularly in AI and technology. He adds that 56% of respondents trust their employers to be transparent about business decisions that will impact their role.

“It is clear that while employers are excited about rolling out AI-powered tools and solutions, they should also be transparent and forthright about how AI will transform the company’s operations and processes, and more importantly, how it will impact the employees’ job security and career prospects,” says Fahad.

Redefining work roles

Experts say that the impact of AI may be more nuanced than simply replacing people at work.

“Yes, we are definitely seeing AI reshape job functions in Malaysia though it’s less about outright replacement and more about redefinition,” says Choo.

Choo says the next five to 10 years will mark the rise of ‘hybrid intelligence’ where humans and AI collaborate as equal partners. — NTT Data


Razin shares a similar sentiment, saying his company is also starting to see signs of generative AI changing the shape of the labour market in Malaysia.

“At this moment in time, we’re witnessing more of its impact on job transformation than full-on replacement,” he says.

Razin adds that repetitive or process-driven roles are being partially replaced or heavily supported by AI tools. He cites examples like some companies turning to AI to automate customer service by using chatbots or to perform document sorting or data entry.

According to Choo, Gen-AI powered chatbots and voicebots are now able to handle “up to 90% of fact-based customer service queries”, reducing the need for large call centre teams.

Razin adds that his company is also seeing changes in sectors like marketing, finance, education and tech services.

“These industries are adopting generative AI to speed up routine work, which means job scopes are evolving,” says Razin, adding that some local banks have started automating things like loan processing and compliance checks.

“So, rather than cutting jobs, they’re moving people into new roles that focus on oversight and analysis,” says Razin.

A 2024 national study by TalentCorp reveals that around 620,000 jobs – equivalent to 18% of formal sector roles in Malaysia – are expected to be significantly impacted by AI, digitalisation, and the green economy within the next three to five years.

The 72-page report highlights 14 roles including incident investigator, cloud administrator, and applications support engineer as among those on the High Impact list. It also listed 51 roles on the Medium Impact list such as IT audit manager, customer experience manager and data centre operations engineer.

In an article published by the World Economic Forum in June, Human Resources Minister Steven Sim highlights the report’s findings and says: “Workers currently in these roles require cross-skilling, upskilling or even reskilling.”

Why AI?

Machines may be better than people at specific tasks at work. Fahad says AI-based solutions are capable of processing large volumes of data and looking at established patterns or past history to perform repetitive tasks. The key here, he says, is that AI is able to do so with better accuracy and consistency.

“These tools are highly applicable in tasks that require standardisation, speed, and scale. AI systems can also operate round-the-clock, which increases outcomes and greatly reduces time and cost,” Fahad adds.

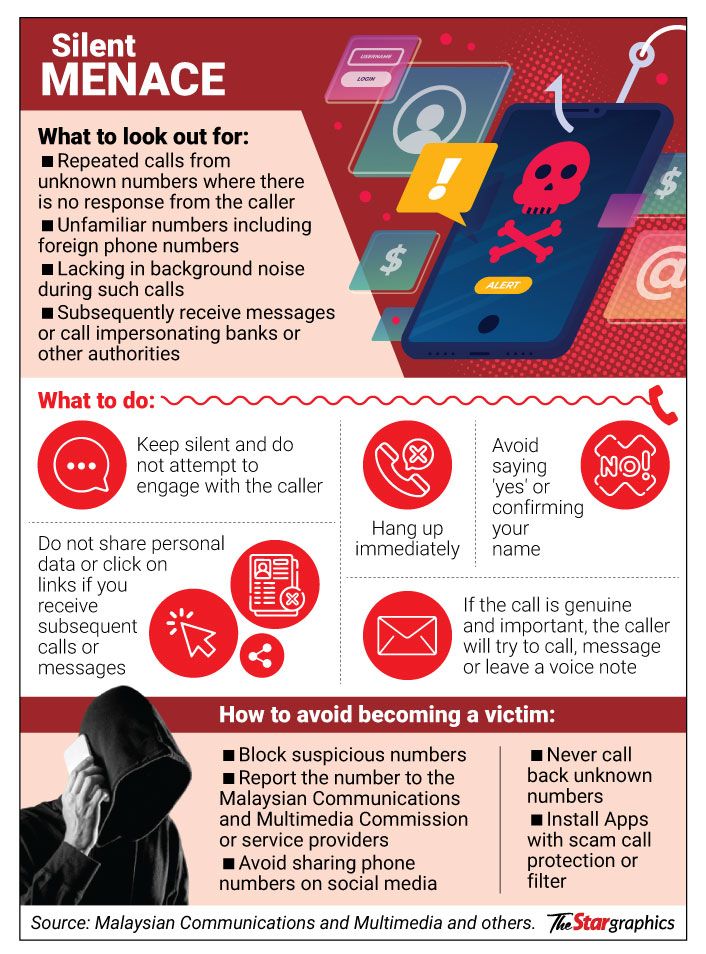

According to an Ipsos AI Monitor 2025 survey involving 500 Malaysian adults, 63% fear AI’s potential to replace their current job within the next three to five years. — This visual is human-created, AI-aided


Choo explains that tasks that would normally take human teams days to do – such as fraud detection, code generation or content summarisation – can now be completed by AI in a shorter amount of time.

“Generative AI, in particular, is a strong performer when applied to structured domains: drafting documents, generating marketing visuals and videos, producing basic code, and summarising reports,” Choo says, adding that these tools operate best when provided with clear inputs and boundaries, making them highly viable in predictable scenarios.

Apart from processing huge volumes of data, Razin says advanced AI solutions are also capable of spotting trends across complex datasets and of continuously learning through feedback loops.

What the future brings

Prime Minister Datuk Seri Anwar Ibrahim has made it known that Malaysia is committed to becoming a leader in AI and digital transformation in the Asean region.

During the launch of the National AI Office last year, he emphasised that Malaysia must embrace the need for tech-driven change.

“History has shown that industrial revolutions and technological advancements initially sparked anxiety but ultimately created more opportunities. This is why training and digital literacy are critical in equipping our workforce for these changes,” he said in his speech.

As for the challenges inherent in the use of AI, Anwar said in an Aug 18 report by The Star that Malaysians have to face the hurdles head-on by emphasising humanistic values and critical thinking.

“We must not only focus on developing expertise but also on nurturing values,” he explains.

While Malaysia has made meaningful progress through frameworks like the National AI Roadmap and the Digital Economy Blueprint (MyDigital) with initiatives that reflect strong policy intent and direction, Razin says the pace of AI adoption in the workplace is outstripping both skills development and policy execution.

“One of the most urgent gaps is in talent. There’s growing demand for AI-literate professionals such as engineers, data scientists, prompt engineers, and ethics specialists, but education and training systems haven’t yet scaled to meet this demand.

“The workforce also lacks widespread access to affordable, high-quality upskilling pathways that align with the real-world applications of AI,” he adds.

Razin believes that for Malaysia to truly thrive in the AI era, policies must be “adaptive, data-informed, and shaped in collaboration with those building and using these tools daily”.

Choo says the next five to 10 years will mark the rise of “hybrid intelligence” where humans and AI collaborate as equal partners. He says new AI-driven roles that have emerged include AI assistant trainers (experts who fine-tune how AI behaves and communicates) and AI governance leads (who oversee bias, ethics and compliance).

“We see this across every function: marketers using GenAI to personalise outreach, analysts using AI to simulate future scenarios, and engineers working with AI to rapidly prototype innovations. The emphasis will shift from hard skills alone to cross-functional fluency, blending AI literacy with domain expertise,” adds Choo.

In Malaysia, Choo says AI transformation can also be seen in areas like healthtech, smart manufacturing and agritech where roles in digital twin modelling and data privacy are gaining traction.

“The future is not about who gets replaced, but who gets reimagined. With the right support, that can and should include everyone,” he concludes.

By ANGELIN YEOH
